This is a living record of my attempts to teach a computer how to cook, and will be updated as time permits. The post was originally authored 3/9/2021, dealing with work that I had done previously in 2020.

With the release of TensorFlow 2.0, it has become easier than ever for hobbyists to get into machine learning. Early in the coronavirus pandemic, I found myself with time to spare and became interested in using a neural network for my own purposes. I thought it would be fun to mix it with one of my other hobbies: cooking.

This project can be broken into a couple of different components:

- Data collection: There is a wealth of online recipe websites. For the purposes of this exercise, my plan was simply to pick one where the actual recipe (not the story behind it) is easy to parse.

- Pipelining: Once the data is collected, some work is needed to ensure that recipes can be fed into an ANN in a convenient form.

- Recurrent Neural Network: There are a number of architectures that should work for actually generating meaningful recipes. I started with an RNN, since they are often used in natural language processing text generators.

Originally, I planned to scrape the web for recipe data, and used Python’s asynchronous libraries to build an efficient task scheduler for HTTP requests. As it turns out, I built mine to be a bit too efficient and accidentally DDoSed a recipe website for a few seconds before realizing my error. To use my time more efficiently, I decided to search for an already curated dataset and settled on a Food.com dataset hosted on Kaggle. I then used an RNN based on the Keras example as a template, and went to work trying to generate recipe names on a per-character basis.
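For anyone tempted to repeat the scraping experiment, here is a minimal sketch of how an async scheduler could be throttled. This is not the scheduler I actually built: `fetch`, `polite_crawl`, `max_in_flight`, and `delay_s` are hypothetical names, the URLs are placeholders, and the "request" is simulated with a sleep so nothing touches a real server.

```python
import asyncio


async def fetch(url: str) -> str:
    # Hypothetical stand-in for a real HTTP request: sleeps instead of hitting a server.
    await asyncio.sleep(0.01)
    return f"<html>{url}</html>"


async def polite_crawl(urls, max_in_flight=2, delay_s=0.01):
    """Fetch every URL while capping concurrency and pausing after each request."""
    gate = asyncio.Semaphore(max_in_flight)
    in_flight = 0
    peak = 0  # highest number of simultaneous requests observed

    async def worker(url):
        nonlocal in_flight, peak
        async with gate:  # at most max_in_flight workers get past this line
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                page = await fetch(url)
            finally:
                in_flight -= 1
            # Hold the slot a little longer so requests stay spaced out.
            await asyncio.sleep(delay_s)
            return page

    pages = await asyncio.gather(*(worker(u) for u in urls))
    return pages, peak


# Placeholder URLs under the reserved .invalid domain; they are never requested.
urls = [f"https://example.invalid/recipe/{i}" for i in range(10)]
pages, peak = asyncio.run(polite_crawl(urls))
```

The semaphore bounds how many requests are in flight at once, and the extra sleep keeps each slot occupied briefly after a response comes back. A real scraper would also want to respect the site's robots.txt and published rate limits.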

Initial Network and Characterization

I started by focusing on just generating recipe names instead of recipe steps for a few reasons:

- Problem Complexity: There are more possible configurations for steps, including about twice the number of unique characters and many more possible words. There are also many more valid word combinations.

- Input Data Size: The total length of the combined steps and names files is approximately 124,000,000 characters. On my current hardware, it is impractical to hold that in RAM, and just as impractical to pre-slice the data and store it on disk.
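To put a rough number on the data size problem: assuming a stride of one character and one byte per one-hot entry, pre-slicing 124,000,000 windows with the 40-character window and 65-character vocabulary of the steps network described in this post comes to about 124,000,000 × 40 × 65 ≈ 322 GB. One common way around this is to build the one-hot batches lazily. The sketch below is an illustration of that idea, not the pipeline I used; `build_vocab` and `batch_generator` are hypothetical helper names, and it assumes fewer than 256 unique characters so that the encoded corpus fits in one byte per character.

```python
import numpy as np


def build_vocab(text):
    """Map each unique character to an integer index."""
    chars = sorted(set(text))
    return {c: i for i, c in enumerate(chars)}


def batch_generator(text, char_to_idx, window=40, batch_size=128):
    """Yield (x, y) one-hot batches lazily instead of pre-slicing the corpus."""
    vocab = len(char_to_idx)
    if vocab > 256:
        raise ValueError("this sketch assumes at most 256 unique characters")
    # One byte per character: the only full-size copy of the corpus kept in memory.
    encoded = np.array([char_to_idx[c] for c in text], dtype=np.uint8)
    n_windows = len(encoded) - window  # number of (window, next character) pairs
    positions = np.arange(window)
    for start in range(0, n_windows, batch_size):
        stop = min(start + batch_size, n_windows)
        x = np.zeros((stop - start, window, vocab), dtype=np.float32)
        y = np.zeros((stop - start, vocab), dtype=np.float32)
        for row, pos in enumerate(range(start, stop)):
            x[row, positions, encoded[pos:pos + window]] = 1.0  # input characters
            y[row, encoded[pos + window]] = 1.0  # the character to predict
        yield x, y


# Tiny usage example on a toy corpus.
text = "cheesy cheese salad with cheese"
vocab = build_vocab(text)
x, y = next(batch_generator(text, vocab, window=5, batch_size=4))
```

Only one batch of one-hot data exists at a time, so memory use depends on `batch_size` rather than on the length of the corpus. The trade-off is that the slicing work is repeated every epoch.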

With my current setup (8 GB RAM, 4 cores at 3.4 GHz, NVIDIA GeForce GTX 750 Ti), a relatively modest network (40 input neurons, 124 LSTM neurons, and 65 dense neurons) takes about 10 hours to run a single epoch. By comparison, training on just the names (fewer output neurons required, less overall data) yields an epoch time of a few minutes, allowing me to train the network to the point of generating sane outputs in about a day.

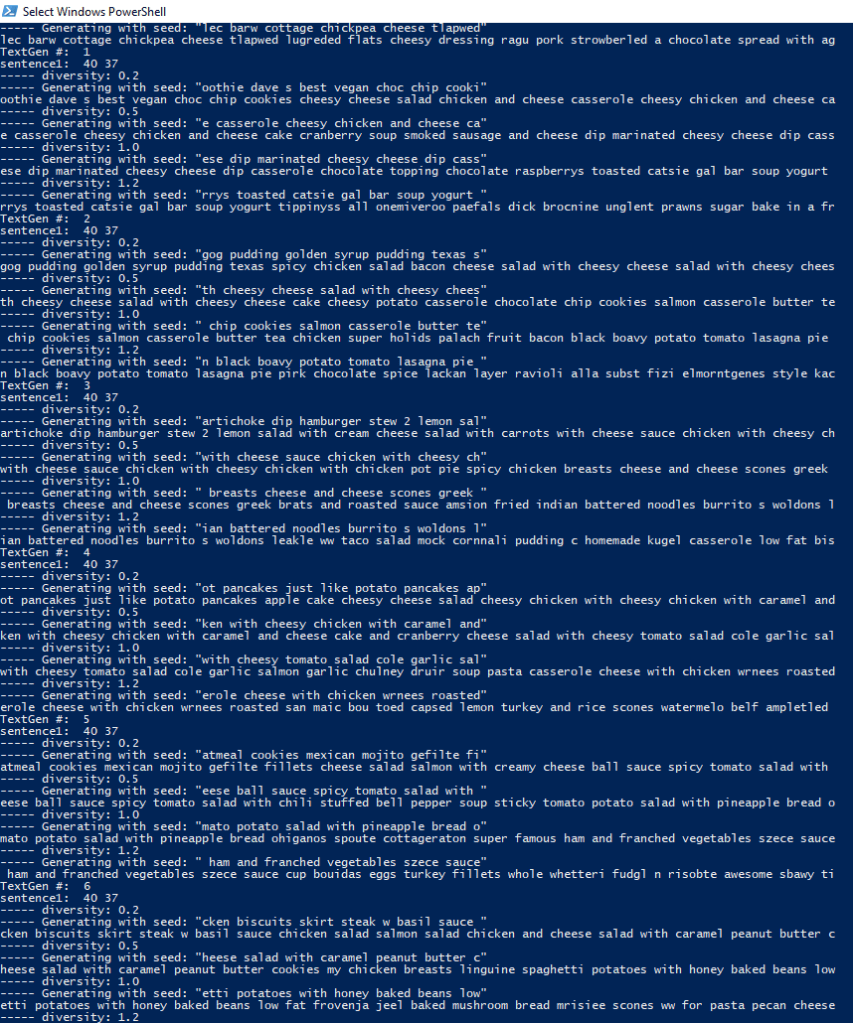

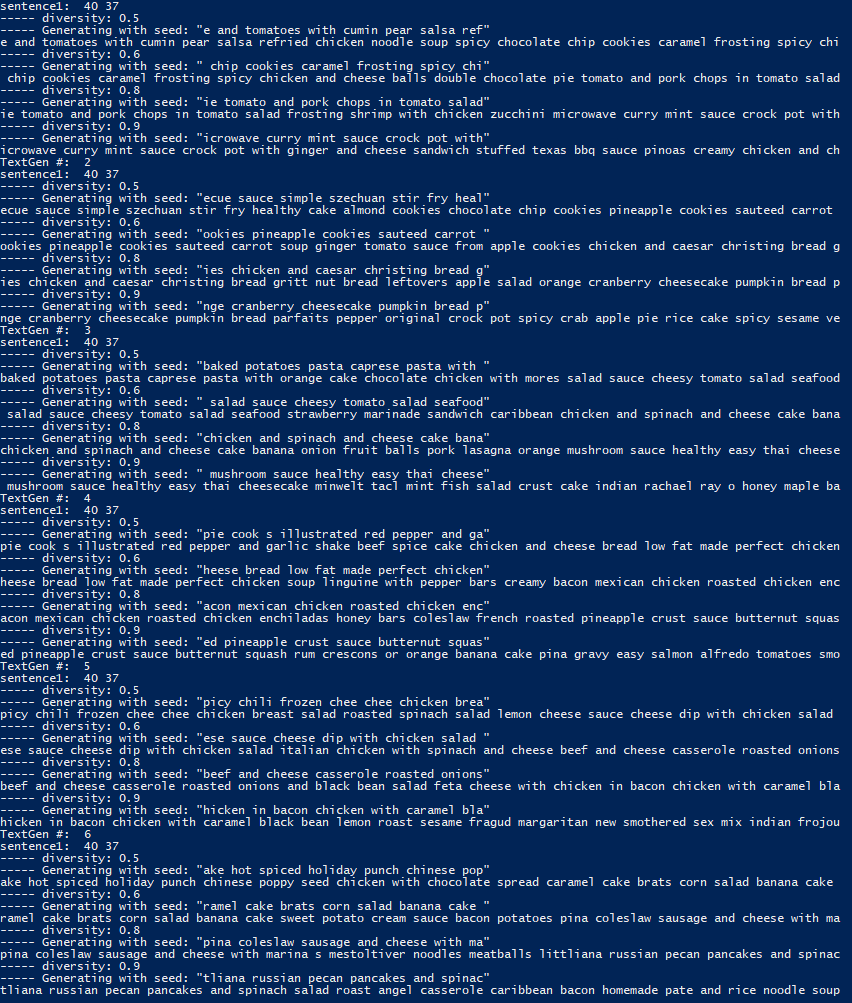

After performing several hyperparameter sweeps using a Taguchi matrix to maximize the information learned per test, I eventually settled on 50 input neurons (i.e. tracking the previous 50 characters), 500 LSTM neurons, and 39 outputs (the total number of unique characters in the corpus).
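For readers who haven't met Taguchi matrices before, the sketch below shows the general idea using the standard L9 orthogonal array, which covers up to four three-level factors in 9 runs instead of a full grid. The factor names and level values in `FACTORS` are hypothetical and chosen only for illustration; they are not the exact sweep I ran.

```python
from collections import Counter
from itertools import combinations

# Standard L9 orthogonal array: 9 runs, up to four 3-level factors (levels 0..2).
L9 = [
    (0, 0, 0, 0),
    (0, 1, 1, 1),
    (0, 2, 2, 2),
    (1, 0, 1, 2),
    (1, 1, 2, 0),
    (1, 2, 0, 1),
    (2, 0, 2, 1),
    (2, 1, 0, 2),
    (2, 2, 1, 0),
]

# Hypothetical factors and levels, for illustration only.
FACTORS = {
    "window": (30, 40, 50),
    "lstm_units": (124, 250, 500),
    "learning_rate": (0.001, 0.003, 0.01),
}


def is_orthogonal(array):
    """Check that every pair of columns contains each level pair equally often."""
    for a, b in combinations(range(len(array[0])), 2):
        pairs = Counter((row[a], row[b]) for row in array)
        levels_a = {row[a] for row in array}
        levels_b = {row[b] for row in array}
        all_pairs_present = len(pairs) == len(levels_a) * len(levels_b)
        evenly_spread = len(set(pairs.values())) == 1
        if not (all_pairs_present and evenly_spread):
            return False
    return True


def build_runs(array, factors):
    """Translate array rows into hyperparameter dicts, one factor per column."""
    names = list(factors)
    return [
        {name: factors[name][row[col]] for col, name in enumerate(names)}
        for row in array
    ]


runs = build_runs(L9, FACTORS)
for run in runs:
    print(run)
```

With three factors at three levels each, a full grid would need 27 training runs. The orthogonal array gets by with 9 while still pairing every level of one factor with every level of each other factor exactly once.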

The first iteration of the model had a neat quirk: no matter what the previous inputs were, if there wasn’t a significant penalty to the relative diversity of the text output, then most of the names generated were “cheesy cheese salad with cheese.” Despite that, the generated text was at least something that could be mistaken for real recipe titles.
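As a rough illustration of what a diversity setting does, here is a minimal sketch of temperature-based sampling. It assumes that "diversity" plays the same role as the temperature argument in the Keras character-level text generation example. The function names `rescale` and `sample_index` and the example probabilities are hypothetical.

```python
import numpy as np


def rescale(probs, temperature=1.0):
    """Reshape a probability distribution: low temperature sharpens, high flattens."""
    probs = np.asarray(probs, dtype=np.float64)
    logits = np.log(probs + 1e-12) / temperature  # small epsilon avoids log(0)
    logits -= logits.max()  # keep exp() numerically stable
    scaled = np.exp(logits)
    return scaled / scaled.sum()


def sample_index(probs, temperature=1.0, rng=None):
    """Draw the index of the next character from the rescaled distribution."""
    rng = rng if rng is not None else np.random.default_rng()
    scaled = rescale(probs, temperature)
    return int(rng.choice(len(scaled), p=scaled))


# Made-up network output over a three-character vocabulary.
preds = [0.7, 0.2, 0.1]
cautious = rescale(preds, temperature=0.2)     # mass piles onto the top character
adventurous = rescale(preds, temperature=5.0)  # closer to uniform
next_char = sample_index(preds, temperature=1.0, rng=np.random.default_rng(0))
```

At low temperatures the sampler almost always picks the single most likely character, which is one plausible way a generator ends up stuck repeating its favorite word. Higher temperatures spread the probability out, at the cost of more misspellings.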

After tooling with the diversity values a bit more, the output looked both more sensible and less cheesy. However, it became clear that a character-based RNN model was going to have difficulty retaining enough nuance to do full recipe generation if it was struggling this much with just creating names.

To learn recipes more effectively, a different method of tokenization will have to be used. Once I’ve done that, I’ll update this post.